Abstract

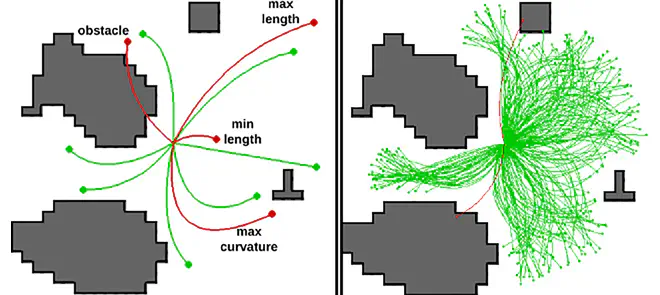

Modern cyber-physical systems are becoming increasingly complex to model, thus motivating data-driven techniques such as reinforcement learning (RL) to find appropriate control agents. However, most systems are subject to hard constraints such as safety or operational bounds. Typically, to learn to satisfy these constraints, the agent must violate them systematically, which is computationally prohibitive in most systems. Recent efforts aim to utilize feasibility models that assess whether a proposed action is feasible to avoid applying the agent’s infeasible action proposals to the system. However, these efforts focus on guaranteeing constraint satisfaction rather than the agent’s learning efficiency. To improve the learning process, we introduce action mapping, a novel approach that divides the learning process into two steps: first learn feasibility and subsequently, the objective by mapping actions into the sets of feasible actions. This paper focuses on the feasibility part by learning to generate all feasible actions through self-supervised querying of the feasibility model. We train the agent by formulating the problem as a distribution matching problem and deriving gradient estimators for different divergences. Through an illustrative example, a robotic path planning scenario, and a robotic grasping simulation, we demonstrate the agent’s proficiency in generating actions across disconnected feasible action sets. By addressing the feasibility step, this paper makes it possible to focus future work on the objective part of action mapping, paving the way for an RL framework that is both safe and efficient.